Your marketing team installed a visitor identification pixel three months ago. They were excited. They checked the dashboard daily for about a week. Then the novelty wore off, nobody logged in, and the tool started collecting dust.

Meanwhile, hundreds of identified visitors per week sit in a standalone dashboard that nobody checks. Names, emails, companies, page views, intent signals — all rotting behind a login screen.

Sound familiar?

The fix isn’t “check the dashboard more.” It’s piping visitor data directly into the tools your team already uses (your warehouse, your CDP, your CRM, your Slack) programmatically, in real time, without anyone logging into anything.

This is a RevOps guide. Not a marketing guide. We’re going to wire Leadpipe’s API into your data stack so visitor identification data flows everywhere it needs to go, automatically, the moment someone is identified.

Table of Contents

- The RevOps Data Problem

- What RevOps Actually Needs

- Architecture: Leadpipe in the RevOps Stack

- Pipeline 1: Data Warehouse

- Pipeline 2: CDP (Segment / RudderStack)

- Pipeline 3: CRM (HubSpot / Salesforce)

- Pipeline 4: Slack (Real-Time Alerts)

- Pipeline 5: AI SDR

- Intent Data for RevOps

- Monitoring and Budgeting

- Attribution with Visitor Data

- FAQ

The RevOps Data Problem

Visitor identification tools create a new data silo. Nobody planned it that way, but that’s what happens when the data lives in a vendor dashboard with no programmatic access.

Here’s the pattern we see over and over:

| Problem | Impact |

|---|---|

| Data lives in vendor dashboard | Not in your warehouse, not in your models |

| No programmatic access | Manual CSV exports (that nobody does) |

| No real-time delivery | Data is stale by the time sales sees it |

| No integration with lead scoring | Visitor data is disconnected from your scoring model |

| No attribution connection | Can’t tie anonymous visits to downstream revenue |

The result: 90% of visitor identification data goes unused. Marketing paid for the tool. Marketing installed the pixel. Marketing got excited. But without a data pipeline, the identified visitors never reach the people who can act on them.

This isn’t a visitor identification problem. It’s a data plumbing problem. And plumbing is what RevOps does.

The core issue: Most visitor identification tools were built for marketers who want a dashboard. RevOps teams need an API. They need webhooks. They need structured data flowing into systems they already manage. The tool itself almost doesn’t matter — what matters is whether you can get the data out.

What RevOps Actually Needs

Before we get into architecture, let’s define the requirements. A visitor identification tool that works for RevOps needs five things:

- API access to query data programmatically: Pull identified visitors by email, page, timeframe, or domain. Feed the data into scripts, pipelines, and models without touching a browser.

- Real-time webhooks: Stream identifications to other systems the moment they happen. No polling. No batch delays. Event-driven delivery.

- Bulk export for warehouse loading: Historical data access with pagination for backfilling warehouse tables.

- Credit and usage monitoring: Programmatic access to consumption data for budgeting, forecasting, and internal reporting.

- Intent data for prioritization: Not just “who visited” but “who is actively researching your category across the web” for lead scoring and pipeline forecasting.

Leadpipe’s API surface covers all five. Let’s look at the architecture.

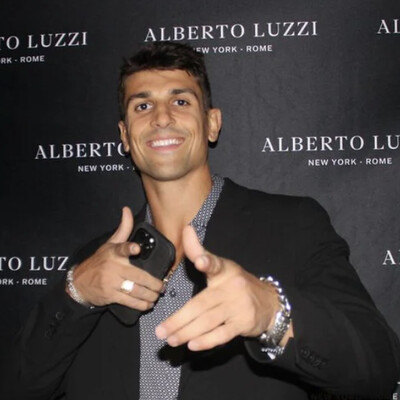

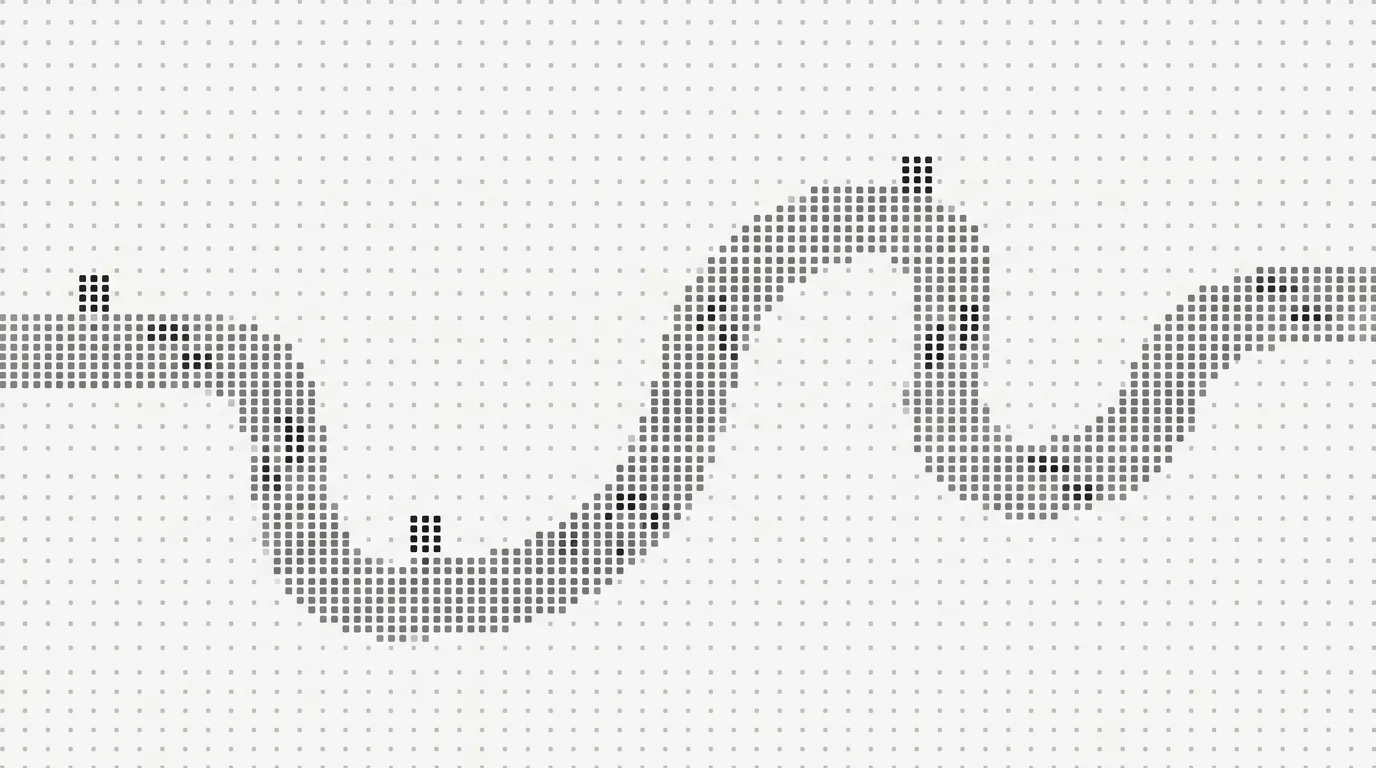

Architecture: Leadpipe in the RevOps Stack

Here’s how Leadpipe fits into a modern RevOps data stack. The pixel captures visitor sessions. The identity graph resolves them. Then the data fans out to every downstream system via API pulls and webhook pushes.

┌──────────────────────────────────────────────────────────────────┐
│                   LEADPIPE IN THE REVOPS STACK                   │
├──────────────────────────────────────────────────────────────────┤
│                                                                  │
│  Website Visitor                                                 │
│       │                                                          │
│       ▼                                                          │
│  Leadpipe Pixel → Identity Graph → Identification                │
│       │                                                          │
│       ├──── Webhook (real-time) ─────┬───────────────────┐       │
│       │                              │                   │       │
│       ▼                              ▼                   ▼       │
│  ┌─────────┐                 ┌──────────────┐       ┌─────────┐  │
│  │  Data   │                 │     CRM      │       │  Slack  │  │
│  │Warehouse│                 │  HubSpot /   │       │ Alerts  │  │
│  │ (BQ /   │                 │  Salesforce  │       │         │  │
│  │  Snow)  │                 └──────────────┘       └─────────┘  │
│  └─────────┘                         │                           │
│       │                              │                           │
│       ▼                              ▼                           │
│  ┌─────────┐                 ┌──────────────┐       ┌─────────┐  │
│  │   CDP   │                 │  Enrichment  │       │ AI SDR  │  │
│  │Segment /│                 │    (Clay)    │       │  Agent  │  │
│  │ Rudder  │                 └──────────────┘       └─────────┘  │
│  └─────────┘                                                     │
│       │                                                          │
│       ▼                                                          │
│  Attribution Model ← Revenue Data ← CRM                          │
│                                                                  │
└──────────────────────────────────────────────────────────────────┘

Two data paths:

- Webhook (push): Real-time. Leadpipe fires a POST to your endpoint the moment a visitor is identified. You configure triggers: First Match (fires once per visitor) or Every Update (fires on each new page view from an identified visitor). This is your real-time channel.

- API (pull): On-demand. Query GET /v1/data with filters for email, page URL, timeframe (24h, 7d, 14d, 30d, 90d, or all), and domain. Returns 50 records per page. This is your backfill and batch channel.

Both paths deliver the same structured visitor payload: person data (name, email, phone, LinkedIn, title), company data (name, industry, size), and behavioral data (pages viewed, referrer, duration, timestamps).
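To make that shared payload concrete, here is a minimal Python sketch that normalizes one record into a typed structure. The field names (`company_name`, `job_title`, `duration_seconds`, and so on) are the ones used by the handlers in this guide, so treat them as assumptions to check against a real delivery. `Visitor` and `parse_visitor` are hypothetical names, not part of any Leadpipe SDK.

```python
from dataclasses import dataclass
from typing import Any, Mapping, Optional


@dataclass(frozen=True)
class Visitor:
    """One identified visitor, flattened from a webhook or API record."""
    email: str
    name: Optional[str] = None
    company_name: Optional[str] = None
    job_title: Optional[str] = None
    phone: Optional[str] = None
    linkedin_url: Optional[str] = None
    page_url: Optional[str] = None
    referrer: Optional[str] = None
    duration_seconds: Optional[int] = None
    timestamp: Optional[str] = None


def parse_visitor(payload: Mapping[str, Any]) -> Visitor:
    """Build a Visitor, ignoring unknown keys and requiring an email."""
    email = (payload.get("email") or "").strip().lower()
    if not email:
        raise ValueError("payload has no email; cannot key this visitor")
    # Copy only the fields the dataclass declares; anything else is dropped.
    known = {k: payload.get(k) for k in Visitor.__dataclass_fields__ if k != "email"}
    return Visitor(email=email, **known)


visitor = parse_visitor({
    "email": "Jane.Doe@Example.com",
    "name": "Jane Doe",
    "company_name": "Example Inc",
    "page_url": "https://example.com/pricing",
    "unexpected_field": "ignored",
})
print(visitor.email, visitor.page_url)
```

Normalizing once at the edge means every downstream pipeline (warehouse, CDP, CRM, Slack) can rely on the same keys and the same lowercase email.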

Now let’s build each pipeline.

Pipeline 1: Data Warehouse

Pattern: Webhook → Lambda / Cloud Function → Snowflake or BigQuery

This is the foundation. Get visitor data into your warehouse, and every downstream analysis — attribution, cohort analysis, marketing ROI — becomes possible.

Webhook Receiver (AWS Lambda)

// Lambda function: receives Leadpipe webhook, inserts into BigQuery
const { BigQuery } = require("@google-cloud/bigquery");

const bigquery = new BigQuery();

exports.handler = async (event) => {
  const visitor = JSON.parse(event.body);

  // Map the webhook payload onto the warehouse columns
  const row = {
    identified_at: visitor.timestamp,
    email: visitor.email,
    name: visitor.name,
    company: visitor.company_name,
    title: visitor.job_title,
    linkedin: visitor.linkedin_url,
    phone: visitor.phone,
    page_url: visitor.page_url,
    referrer: visitor.referrer,
    session_duration: visitor.duration_seconds,
    utm_source: visitor.utm_source,
    utm_medium: visitor.utm_medium,
    utm_campaign: visitor.utm_campaign,
    domain: visitor.domain,
  };

  await bigquery
    .dataset("leadpipe")
    .table("identified_visitors")
    .insert([row]);

  return { statusCode: 200, body: "OK" };
};

Suggested Warehouse Schema

CREATE TABLE leadpipe.identified_visitors (
  identified_at TIMESTAMP,
  email STRING,
  name STRING,
  company STRING,
  title STRING,
  linkedin STRING,
  phone STRING,
  page_url STRING,
  referrer STRING,
  session_duration INT64,
  utm_source STRING,
  utm_medium STRING,
  utm_campaign STRING,
  domain STRING
);

What You Can Do With This

Once visitor data lives in your warehouse, the analysis options open up:

- Content attribution: Which pages produce the most identified visitors? Which produce the highest-value ones (by eventual deal size)?

- Cohort analysis: Do visitors who view 3+ pages convert at higher rates? Does pricing page traffic from organic search outperform paid?

- Marketing ROI: Connect UTM parameters to identified visitors to downstream revenue. Finally answer “which campaign actually generated pipeline?”

- Visitor trends: Track identification volume over time. Spot spikes after campaigns. Measure the cost of anonymous traffic against identification value.

This is where RevOps earns its keep. The data warehouse pipeline alone justifies the entire integration effort.
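One practical detail for the warehouse pipeline: webhook deliveries can repeat (retries, or the Every Update trigger), so it helps to give each row a deterministic key. The sketch below, in Python, maps a payload onto the suggested schema and derives such a key. The payload field names come from the handler code in this guide rather than an official spec, and `to_warehouse_row` / `row_key` are hypothetical helpers. BigQuery's streaming insert accepts a per-row insert ID for best-effort deduplication (for example the `row_ids` argument of `insert_rows_json` in the Python client), which is one place a key like this could be used.

```python
import hashlib
from typing import Any, Dict, Mapping

# Warehouse column -> payload field, following the suggested schema.
# The payload field names are assumptions; verify against a real delivery.
COLUMN_SOURCES = {
    "identified_at": "timestamp",
    "email": "email",
    "name": "name",
    "company": "company_name",
    "title": "job_title",
    "linkedin": "linkedin_url",
    "phone": "phone",
    "page_url": "page_url",
    "referrer": "referrer",
    "session_duration": "duration_seconds",
    "utm_source": "utm_source",
    "utm_medium": "utm_medium",
    "utm_campaign": "utm_campaign",
    "domain": "domain",
}


def to_warehouse_row(visitor: Mapping[str, Any]) -> Dict[str, Any]:
    """Map a webhook payload onto the warehouse columns (missing -> None)."""
    return {column: visitor.get(source) for column, source in COLUMN_SOURCES.items()}


def row_key(row: Mapping[str, Any]) -> str:
    """Deterministic key: same visitor, page, and timestamp hash the same."""
    parts = (row.get("email"), row.get("page_url"), row.get("identified_at"))
    raw = "|".join("" if part is None else str(part).strip().lower() for part in parts)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


payload = {
    "email": "jane@example.com",
    "page_url": "https://example.com/pricing",
    "timestamp": "2026-04-02T10:15:00Z",
    "company_name": "Example Inc",
}
row = to_warehouse_row(payload)
retry_row = to_warehouse_row(dict(payload))  # simulated redelivery
print(row_key(row) == row_key(retry_row))
```

If you would rather dedupe in SQL, the same key can be stored as a column and used in a `QUALIFY`-style or `GROUP BY` cleanup step.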

Pipeline 2: CDP (Segment / RudderStack)

Pattern: Webhook → Segment identify() + track() calls

If you’re running a CDP, visitor identifications become first-class events in your customer data model. This unlocks cross-channel identity unification, audience building, and personalization.

Webhook Receiver → Segment

// @segment/analytics-node exposes Analytics as a named export
const { Analytics } = require("@segment/analytics-node");

const analytics = new Analytics({ writeKey: process.env.SEGMENT_WRITE_KEY });

exports.handler = async (event) => {
  const visitor = JSON.parse(event.body);

  // Identify the user in Segment
  analytics.identify({
    userId: visitor.email,
    traits: {
      name: visitor.name,
      email: visitor.email,
      company: visitor.company_name,
      title: visitor.job_title,
      phone: visitor.phone,
      linkedin: visitor.linkedin_url,
    },
  });

  // Track the page visit as an event
  analytics.track({
    userId: visitor.email,
    event: "Visitor Identified",
    properties: {
      page_url: visitor.page_url,
      referrer: visitor.referrer,
      session_duration: visitor.duration_seconds,
      utm_source: visitor.utm_source,
      utm_campaign: visitor.utm_campaign,
      identification_source: "leadpipe",
    },
  });

  // Flush before the function returns so queued events are not lost
  await analytics.flush();

  return { statusCode: 200, body: "OK" };
};

Use Cases

| CDP Use Case | How It Works |

|---|---|

| Identity unification | Merge anonymous CDP profiles with Leadpipe identifications |

| Audience building | Create audiences of “identified pricing page visitors” for retargeting |

| Personalization | Personalize on-site experience for identified return visitors |

| Cross-channel orchestration | Trigger email sequences, ad audiences, and outreach from one event |

The key insight: your CDP probably already has anonymous sessions from these visitors. When Leadpipe identifies them, the identify() call stitches the anonymous profile to a real person. Every previous anonymous page view suddenly has a name attached.
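Stitching typically depends on the identify call carrying both the CDP's anonymous ID and the resolved user ID. As a rough Python sketch, the function below builds identify and track message bodies in the general shape of Segment's spec (`userId`, `anonymousId`, `traits`, `event`, `properties`). Whether the Leadpipe payload exposes your CDP's anonymous ID is an assumption: `anonymous_id` here is a hypothetical value you would capture yourself (for example from the CDP's own cookie) and pass along.

```python
from typing import Any, Dict, List, Mapping, Optional


def build_segment_calls(visitor: Mapping[str, Any],
                        anonymous_id: Optional[str] = None) -> List[Dict[str, Any]]:
    """Return identify and track message bodies for one identified visitor."""
    traits = {
        "name": visitor.get("name"),
        "email": visitor.get("email"),
        "company": visitor.get("company_name"),
        "title": visitor.get("job_title"),
    }
    identify: Dict[str, Any] = {
        "type": "identify",
        "userId": visitor["email"],
        # Drop empty traits rather than sending nulls.
        "traits": {k: v for k, v in traits.items() if v},
    }
    track: Dict[str, Any] = {
        "type": "track",
        "userId": visitor["email"],
        "event": "Visitor Identified",
        "properties": {
            "page_url": visitor.get("page_url"),
            "identification_source": "leadpipe",
        },
    }
    if anonymous_id:
        # Carrying both IDs on the same message is what gives the CDP a
        # chance to link earlier anonymous history to this user.
        identify["anonymousId"] = anonymous_id
        track["anonymousId"] = anonymous_id
    return [identify, track]


calls = build_segment_calls(
    {"email": "jane@example.com", "name": "Jane Doe", "page_url": "/pricing"},
    anonymous_id="anon-123",
)
print([c["type"] for c in calls])
```

Without an anonymous ID the calls still create a known profile; they just cannot attach the earlier anonymous sessions.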

Pipeline 3: CRM (HubSpot / Salesforce)

Leadpipe has native integrations for HubSpot and Salesforce — if you want zero-code setup, those work out of the box. But RevOps teams often want more control: custom field mapping, deduplication logic, conditional routing.

Here’s the API pattern for any CRM.

Webhook → HubSpot (Custom)

const hubspot = require("@hubspot/api-client");

const client = new hubspot.Client({ accessToken: process.env.HUBSPOT_TOKEN });

exports.handler = async (event) => {
  const visitor = JSON.parse(event.body);

  // Check if contact already exists
  let contact;
  try {
    const search = await client.crm.contacts.searchApi.doSearch({
      filterGroups: [{
        filters: [{
          propertyName: "email",
          operator: "EQ",
          value: visitor.email,
        }],
      }],
    });
    contact = search.results[0];
  } catch (e) {
    // A failed lookup is not proof the contact is missing. Stop here
    // rather than risk creating a duplicate.
    console.error("HubSpot contact search failed", e);
    return { statusCode: 502, body: "Contact lookup failed" };
  }

  const properties = {
    email: visitor.email,
    firstname: visitor.name?.split(" ")[0],
    lastname: visitor.name?.split(" ").slice(1).join(" "),
    company: visitor.company_name,
    jobtitle: visitor.job_title,
    phone: visitor.phone,
    hs_lead_status: "NEW",
    leadpipe_source_page: visitor.page_url,
    leadpipe_referrer: visitor.referrer,
    leadpipe_identified_at: visitor.timestamp,
  };

  if (contact) {
    // Update existing contact -- don't overwrite CRM-managed fields
    await client.crm.contacts.basicApi.update(contact.id, {
      properties: {
        leadpipe_source_page: visitor.page_url,
        leadpipe_identified_at: visitor.timestamp,
      },
    });
  } else {
    // Create new contact
    await client.crm.contacts.basicApi.create({ properties });
  }

  return { statusCode: 200, body: "OK" };
};

Field Mapping

| Leadpipe Field | HubSpot Property | Salesforce Field |

|---|---|---|

| email | email | Email |

| name | firstname / lastname | FirstName / LastName |

| company_name | company | Company |

| job_title | jobtitle | Title |

| page_url | leadpipe_source_page (custom) | LeadSource_Page__c (custom) |

| referrer | leadpipe_referrer (custom) | LeadSource_Referrer__c (custom) |

| phone | phone | Phone |

Deduplication is critical. Always search for existing contacts before creating new ones. The last thing your sales team needs is duplicate records flooding their CRM. The Leadpipe + Clay + HubSpot integration guide covers this pattern in depth, including waterfall enrichment for filling in missing fields before the data hits your CRM.
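The field mapping and the search-before-create rule can be expressed as data plus one small decision function. The Python sketch below is illustrative only: the custom field names are the ones from the table above, `existing_emails` stands in for the result of a real CRM search, and `map_fields` / `plan_upsert` are hypothetical helpers.

```python
from typing import Any, Dict, Mapping, Set, Tuple

# Leadpipe field -> CRM field, per the mapping table.
FIELD_MAP = {
    "hubspot": {
        "email": "email", "company_name": "company", "job_title": "jobtitle",
        "phone": "phone", "page_url": "leadpipe_source_page",
        "referrer": "leadpipe_referrer",
    },
    "salesforce": {
        "email": "Email", "company_name": "Company", "job_title": "Title",
        "phone": "Phone", "page_url": "LeadSource_Page__c",
        "referrer": "LeadSource_Referrer__c",
    },
}
NAME_FIELDS = {"hubspot": ("firstname", "lastname"),
               "salesforce": ("FirstName", "LastName")}
# Fields this pipeline owns and may overwrite on an existing record.
LEADPIPE_OWNED = {"page_url", "referrer"}


def map_fields(visitor: Mapping[str, Any], crm: str,
               owned_only: bool = False) -> Dict[str, Any]:
    """Translate a visitor payload into CRM properties, skipping empty values."""
    out = {
        target: visitor[source]
        for source, target in FIELD_MAP[crm].items()
        if visitor.get(source) and (not owned_only or source in LEADPIPE_OWNED)
    }
    if not owned_only and visitor.get("name"):
        first, _, last = visitor["name"].strip().partition(" ")
        first_key, last_key = NAME_FIELDS[crm]
        out[first_key] = first
        if last.strip():
            out[last_key] = last.strip()
    return out


def plan_upsert(visitor: Mapping[str, Any], existing_emails: Set[str],
                crm: str = "hubspot") -> Tuple[str, Dict[str, Any]]:
    """Update only Leadpipe-owned fields on a known contact, else create."""
    if visitor["email"].strip().lower() in existing_emails:
        return "update", map_fields(visitor, crm, owned_only=True)
    return "create", map_fields(visitor, crm)


jane = {"email": "jane@example.com", "name": "Jane van Doe",
        "company_name": "Example Inc", "page_url": "/pricing"}
print(plan_upsert(jane, existing_emails={"jane@example.com"}))
print(plan_upsert(jane, existing_emails=set(), crm="salesforce"))
```

Keeping the owned-field list explicit makes it harder for a later change to start overwriting values that sales maintains by hand.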

Pipeline 4: Slack (Real-Time Alerts)

This is the highest-ROI pipeline you can build. It takes about 15 minutes, needs nothing beyond one small serverless function and a Slack incoming webhook URL, and puts identified visitor data directly in front of the people who can act on it.

High-Intent Visitor Alert

exports.handler = async (event) => {
  const visitor = JSON.parse(event.body);

  // Only alert on high-intent pages (guard against a missing page_url)
  const highIntentPages = ["/pricing", "/demo", "/contact", "/case-studies"];
  const pageUrl = visitor.page_url || "";
  const isHighIntent = highIntentPages.some((p) => pageUrl.includes(p));

  if (!isHighIntent) return { statusCode: 200, body: "Skipped" };

  const slackMessage = {
    blocks: [
      {
        type: "header",
        text: {
          type: "plain_text",
          text: "🔥 High-Intent Visitor Identified",
        },
      },
      {
        type: "section",
        fields: [
          { type: "mrkdwn", text: `*Name:*\n${visitor.name}` },
          { type: "mrkdwn", text: `*Company:*\n${visitor.company_name}` },
          { type: "mrkdwn", text: `*Title:*\n${visitor.job_title}` },
          { type: "mrkdwn", text: `*Page:*\n${visitor.page_url}` },
          { type: "mrkdwn", text: `*Email:*\n${visitor.email}` },
          {
            type: "mrkdwn",
            text: `*LinkedIn:*\n<${visitor.linkedin_url}|Profile>`,
          },
        ],
      },
    ],
  };

  // Post to a Slack incoming webhook (global fetch requires Node 18+)
  await fetch(process.env.SLACK_WEBHOOK_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(slackMessage),
  });

  return { statusCode: 200, body: "Sent" };
};

Alert Types Worth Building

| Alert | Trigger | Channel |

|---|---|---|

| High-intent visitor | Pricing/demo page + identified | #sales-alerts |

| Target account | Visitor from named account list | AE’s DM |

| Return visitor | Previously identified visitor returns | #sales-alerts |

| Executive visit | C-level or VP title identified | #leadership |

The Slack pipeline converts passive dashboard data into active sales signals. Your AE gets a notification on their phone while the prospect is still on the site. Response time drops from “maybe next week” to “within minutes.”
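The four alert types in the table can share one routing function. The Python sketch below is one possible shape, not Leadpipe functionality: `target_accounts` (company name to the owning AE's Slack handle) and `seen_emails` are hypothetical inputs you would maintain yourself, and the title pattern for executives is a rough heuristic.

```python
import re
from typing import Any, List, Mapping, Set, Tuple
from urllib.parse import urlparse

HIGH_INTENT_PATHS = ("/pricing", "/demo", "/contact", "/case-studies")
# Rough match for C-level and VP titles; tune for your own data.
EXEC_TITLE = re.compile(r"\b(chief|c[a-z]o|vp|vice president)\b", re.IGNORECASE)


def route_alerts(visitor: Mapping[str, Any],
                 target_accounts: Mapping[str, str],
                 seen_emails: Set[str]) -> List[Tuple[str, str]]:
    """Return (alert_type, slack_destination) pairs for one identification."""
    alerts: List[Tuple[str, str]] = []

    # Match on the URL path so query strings and hosts do not interfere.
    path = urlparse(visitor.get("page_url") or "").path
    if path.startswith(HIGH_INTENT_PATHS):
        alerts.append(("high_intent", "#sales-alerts"))

    owner = target_accounts.get((visitor.get("company_name") or "").lower())
    if owner:
        alerts.append(("target_account", owner))

    if visitor.get("email") in seen_emails:
        alerts.append(("return_visitor", "#sales-alerts"))

    if EXEC_TITLE.search(visitor.get("job_title") or ""):
        alerts.append(("executive", "#leadership"))

    return alerts


example = {
    "email": "pat@acme.com",
    "company_name": "Acme",
    "job_title": "VP of Finance",
    "page_url": "https://example.com/pricing?plan=pro",
}
print(route_alerts(example, target_accounts={"acme": "@alex"}, seen_emails=set()))
```

Returning a list rather than a single destination lets one visit trigger several alerts, for instance an executive from a target account on the pricing page.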

Pipeline 5: AI SDR

The most powerful pipeline: identified visitors flow directly into an AI agent that researches the prospect, drafts personalized outreach, and sends it — all within minutes of the identification.

The Pattern

Webhook → Intent Classification → AI Personalization → Send

The visitor identification provides the who and what (who visited, what they looked at). Your AI agent adds the why (company context, recent news, pain points) and generates personalized outreach that references the specific pages they viewed.
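As a rough illustration of those stages, the Python sketch below gates on intent, builds a prompt from the visitor record, and hands off to two injected callables. Everything here is an assumption for illustration: the path-to-tier table, the prompt wording, and the `generate` and `send` callables, which stand in for whatever LLM client and email sender you actually use.

```python
from typing import Any, Callable, Mapping, Optional
from urllib.parse import urlparse

# Hypothetical mapping from URL path prefix to intent tier.
INTENT_BY_PATH = {"/pricing": "high", "/demo": "high", "/case-studies": "medium"}


def classify_intent(page_url: str) -> str:
    """Stage 1: classify a visit by the path of the page viewed."""
    path = urlparse(page_url or "").path
    for prefix, tier in INTENT_BY_PATH.items():
        if path.startswith(prefix):
            return tier
    return "low"


def build_prompt(visitor: Mapping[str, Any]) -> str:
    """Stage 2 input: give the model the who and the what, nothing invented."""
    name = visitor.get("name") or "the visitor"
    title = visitor.get("job_title") or "role unknown"
    company = visitor.get("company_name") or "their company"
    return (
        f"Write a short, specific outreach email to {name}, {title} at {company}. "
        f"They just viewed {visitor.get('page_url')}. "
        "Reference that page and do not invent facts."
    )


def handle_identification(visitor: Mapping[str, Any],
                          generate: Callable[[str], str],
                          send: Callable[[str, str], None]) -> Optional[str]:
    """Run the pattern end to end; skip anything that is not high intent."""
    if classify_intent(visitor.get("page_url") or "") != "high":
        return None
    draft = generate(build_prompt(visitor))
    send(visitor["email"], draft)
    return draft


# Stubs in place of a real model and mail provider.
outbox = []
draft = handle_identification(
    {"email": "jane@example.com", "name": "Jane Doe",
     "page_url": "https://example.com/pricing"},
    generate=lambda prompt: "DRAFT based on: " + prompt[:40],
    send=lambda to, body: outbox.append((to, body)),
)
print(len(outbox))
```

Injecting `generate` and `send` keeps the gating logic testable without calling a model or sending real email.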

We’ve written two detailed implementation guides for this pipeline:

- Feed Real-Time Visitor Data Into Your AI Agent — Full code walkthrough with LangChain, CrewAI, and custom Python agents

- The AI SDR Data Stack: Visitor to Booked Meeting — The complete 6-layer pipeline with tools, costs, and conversion rates at every stage

The short version: teams running this pattern see 3-5x higher response rates compared to cold outbound because the outreach is timely (sent within minutes of a visit), relevant (references specific pages viewed), and targeted (only sent to visitors showing real intent). The data layer that most AI sales agents are missing is exactly this — real-time visitor identity.

Intent Data for RevOps

Visitor identification tells you who visited your site. Leadpipe’s Orbit API tells you who is researching your category across the entire web — even if they’ve never visited your site.

20,000+ intent topics. Person-level data. ICP filtering. Daily audience runs.

For RevOps, intent data is a force multiplier for lead scoring, pipeline forecasting, and marketing strategy.

How to Use It

| RevOps Use Case | Implementation |

|---|---|

| Lead scoring | Feed intent scores (1-100) into your scoring model. A visitor with an intent score of 85 on “CRM Software” is more valuable than a visitor with no cross-web intent signal. |

| Pipeline forecasting | Monitor daily audience sizes for your key topics. Rising audience counts signal growing market demand. Declining counts signal saturation. |

| Marketing strategy | Track which topics are trending via the /v1/intent/topics/movers endpoint. Inform content strategy with actual demand data. |

| Audience export | Export person-level audiences as CSV for warehouse loading or direct outreach. Build saved audiences that refresh daily. |

| ICP validation | Use audience preview counts to validate ICP definitions before committing credits. |
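The lead scoring row can be sketched as a simple blend of an on-site score and the cross-web intent score. This is a minimal Python example under stated assumptions: the 0-100 `site_score` is something your own model produces, the 60/40 weighting is arbitrary, and `lead_score` is a hypothetical helper rather than anything the API returns.

```python
from typing import Optional


def lead_score(site_score: float, intent_score: Optional[float],
               site_weight: float = 0.6) -> float:
    """Blend an on-site score (0-100) with a cross-web intent score (1-100)."""
    if intent_score is None:
        # No cross-web signal: only the on-site share of the score is earned.
        return round(site_weight * site_score, 1)
    return round(site_weight * site_score + (1 - site_weight) * intent_score, 1)


leads = [
    {"email": "a@example.com", "site_score": 50, "intent_score": 85},
    {"email": "b@example.com", "site_score": 70, "intent_score": None},
    {"email": "c@example.com", "site_score": 20, "intent_score": 95},
]
ranked = sorted(
    leads,
    key=lambda lead: lead_score(lead["site_score"], lead["intent_score"]),
    reverse=True,
)
print([lead["email"] for lead in ranked])
```

With these weights, a visitor with moderate site activity and strong intent outranks a more active visitor with no intent signal, which is the behavior the table describes.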

Quick Example: Pull Intent Audience

import requests

resp = requests.post(
    "https://api.aws53.cloud/v1/intent/topics/audience",
    headers={"X-API-Key": "sk_your_key"},
    json={
        "topicIds": ["topic_crm_software"],
        "filters": {
            "seniority": ["VP", "C-Suite", "Director"],
            "companySize": ["51-200", "201-500"],
            "industry": ["Software", "SaaS"]
        },
        "minScore": 70
    }
)

audience = resp.json()
for person in audience["data"]:
    print(f"{person['name']} ({person['title']} at {person['company']}) "
          f"- Intent: {person['score']}/100")

Intent data closes the gap between “who visited” and “who is actively buying.” When you combine visitor identification with cross-web intent signals, your lead scoring model has two independent data sources confirming buyer interest. That’s how you prioritize the pipeline.

Monitoring and Budgeting

RevOps owns budgets. You need to know how fast identification credits are being consumed, when they reset, and whether the pixels are healthy — programmatically, not by logging into a dashboard.

The Account Health Endpoint

GET /v1/data/account returns everything you need:

curl -H "X-API-Key: sk_your_key" \
  https://api.aws53.cloud/v1/data/account

{
  "credits": {
    "used": 347,
    "limit": 500,
    "remaining": 153,
    "percentUsed": 69.4,
    "resetsAt": "2026-05-01T00:00:00Z"
  },
  "pixels": {
    "total": 3,
    "active": 2,
    "paused": 1
  },
  "intentSlots": 5,
  "healthy": true
}

Build Internal Monitoring

import requests

# send_slack_alert(text) is a helper you supply, for example a POST to a
# Slack incoming webhook as in Pipeline 4.

def check_leadpipe_health():
    resp = requests.get(
        "https://api.aws53.cloud/v1/data/account",
        headers={"X-API-Key": "sk_your_key"}
    )
    account = resp.json()

    # Alert if credits are running low
    if account["credits"]["percentUsed"] > 80:
        send_slack_alert(
            f"⚠️ Leadpipe credits at {account['credits']['percentUsed']}% "
            f"({account['credits']['remaining']} remaining). "
            f"Resets {account['credits']['resetsAt']}"
        )

    # Alert if the account-level health flag is false
    if not account["healthy"]:
        send_slack_alert(
            "🚨 Leadpipe account health check FAILED. "
            "Check pixel status and configuration."
        )

    # Alert if pixels are paused unexpectedly
    if account["pixels"]["paused"] > 0:
        send_slack_alert(
            f"⏸️ {account['pixels']['paused']} Leadpipe pixel(s) paused. "
            f"Active: {account['pixels']['active']}/{account['pixels']['total']}"
        )

    return account

Run this on a daily cron. Push the data into your internal reporting dashboard. Now finance knows exactly what the tool costs per identified visitor, when the next billing cycle resets, and whether the pricing tier still fits your traffic volume.
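For forecasting, the same `credits` object supports a simple burn-rate projection. The Python sketch below is a hedged example: the account response only includes `resetsAt`, so `cycle_start` is an assumption you supply (for instance one month before the reset), and `project_credits` is a hypothetical helper using a straight-line estimate.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Mapping


def project_credits(credits: Mapping[str, Any], cycle_start: datetime,
                    now: datetime) -> Dict[str, Any]:
    """Linear projection of credit usage through the end of the cycle."""
    resets_at = datetime.fromisoformat(credits["resetsAt"].replace("Z", "+00:00"))
    elapsed_days = max((now - cycle_start).total_seconds() / 86400, 1e-9)
    days_to_reset = max((resets_at - now).total_seconds() / 86400, 0.0)

    daily_burn = credits["used"] / elapsed_days
    projected_use = credits["used"] + daily_burn * days_to_reset
    return {
        "daily_burn": round(daily_burn, 2),
        "projected_use": projected_use,
        # True when the straight-line estimate passes the plan limit.
        "will_exceed": projected_use > credits["limit"],
    }


# Values from the sample response above; the dates are illustrative.
sample = {"used": 347, "limit": 500, "remaining": 153,
          "percentUsed": 69.4, "resetsAt": "2026-05-01T00:00:00Z"}
projection = project_credits(
    sample,
    cycle_start=datetime(2026, 4, 1, tzinfo=timezone.utc),
    now=datetime(2026, 4, 21, tzinfo=timezone.utc),
)
print(projection)
```

A projection like this turns the 80% threshold alert into an earlier warning: you can flag a cycle that is on pace to exceed the limit well before usage actually gets there.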

Attribution with Visitor Data

This is where it gets interesting for RevOps. Visitor identification data lets you connect anonymous website visits to downstream revenue — closing the loop that every attribution model struggles with.

The Attribution Pattern

Visit (anonymous) → Identification → CRM Contact → Deal Created → Deal Closed
        │                 │                                            │
        ▼                 ▼                                            ▼
    referrer        identified_at                               closed_won_date
    page_url        email                                       deal_amount
    utm_source      company
    utm_campaign

When a visitor is identified, you capture three pieces of attribution data that most tools miss:

- referrer: Traffic source. Did they come from Google organic, a LinkedIn ad, a partner referral? This is first-touch channel attribution.

- page_url: Content attribution. Which page did they land on? Which page was the identification trigger? This tells you which content converts.

- utm_source / utm_campaign: Campaign attribution. If the visit came from a paid campaign, you can tie campaign spend directly to identified leads and eventual revenue.

The SQL Query

Once visitor data is in your warehouse (Pipeline 1), join it against CRM deal data:

SELECT
  v.utm_source,
  v.utm_campaign,
  v.page_url,
  COUNT(DISTINCT v.email) AS identified_visitors,
  COUNT(DISTINCT d.deal_id) AS deals_created,
  SUM(d.deal_amount) AS pipeline_value,
  SUM(CASE WHEN d.stage = 'closed_won' THEN d.deal_amount ELSE 0 END) AS revenue
FROM leadpipe.identified_visitors v
LEFT JOIN crm.deals d ON v.email = d.contact_email
WHERE v.identified_at >= '2026-01-01'
GROUP BY v.utm_source, v.utm_campaign, v.page_url
ORDER BY revenue DESC;

This query answers the question every CMO asks: “Which channels and content are actually generating revenue?” Not pageviews. Not form fills. Revenue. And visitor identification is the bridge that makes it possible: it connects anonymous web sessions to real people who become real customers.
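One caveat with a join like this: if the visitors table holds more than one row per email (repeat visits, or the Every Update trigger), each deal joins to every one of those rows and its amount is summed more than once. The Python sketch below reproduces the effect on toy data with SQLite and shows a first-touch variant that keeps only the earliest row per email before joining. The in-memory schemas merely mimic the `leadpipe` and `crm` names; the data is made up, and ties on the earliest timestamp would still need a tiebreaker.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Attach two in-memory databases so the schema-qualified names resolve.
conn.execute("ATTACH DATABASE ':memory:' AS leadpipe")
conn.execute("ATTACH DATABASE ':memory:' AS crm")
conn.execute(
    "CREATE TABLE leadpipe.identified_visitors "
    "(identified_at TEXT, email TEXT, page_url TEXT, utm_source TEXT)"
)
conn.execute(
    "CREATE TABLE crm.deals "
    "(deal_id TEXT, contact_email TEXT, deal_amount REAL, stage TEXT)"
)
conn.executemany(
    "INSERT INTO leadpipe.identified_visitors VALUES (?, ?, ?, ?)",
    [
        ("2026-01-05", "a@example.com", "/blog/post", "google"),
        ("2026-01-09", "a@example.com", "/pricing", "linkedin"),
        ("2026-01-07", "b@example.com", "/pricing", "linkedin"),
    ],
)
conn.execute(
    "INSERT INTO crm.deals VALUES ('d1', 'a@example.com', 10000.0, 'closed_won')"
)

# Naive join: the single deal is counted once per visitor row.
naive_total = conn.execute(
    "SELECT SUM(d.deal_amount) FROM leadpipe.identified_visitors v "
    "LEFT JOIN crm.deals d ON v.email = d.contact_email"
).fetchone()[0]

FIRST_TOUCH_SQL = """
WITH first_touch AS (
    SELECT v.*
    FROM leadpipe.identified_visitors v
    JOIN (
        SELECT email, MIN(identified_at) AS first_seen
        FROM leadpipe.identified_visitors
        GROUP BY email
    ) f ON f.email = v.email AND f.first_seen = v.identified_at
)
SELECT ft.utm_source,
       COUNT(DISTINCT ft.email) AS identified_visitors,
       COALESCE(SUM(d.deal_amount), 0) AS pipeline_value
FROM first_touch ft
LEFT JOIN crm.deals d ON d.contact_email = ft.email
GROUP BY ft.utm_source
ORDER BY pipeline_value DESC
"""
first_touch_rows = conn.execute(FIRST_TOUCH_SQL).fetchall()

print("naive total:", naive_total)
print("first touch:", first_touch_rows)
```

First touch is only one attribution choice; last touch or an even split across rows follows the same idea of collapsing to one weighted row per contact before summing deal amounts.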

For more on what anonymous traffic actually costs your business, and on how enrichment APIs can fill the gaps that identification alone can’t cover, see the dedicated guides on each topic.

FAQ

How much engineering effort does this take?

Each pipeline is a single serverless function — 30-80 lines of code. If you’re using Leadpipe’s native HubSpot or Salesforce integrations, the CRM pipeline requires zero code. The warehouse and CDP pipelines take an afternoon to build and test. The Slack pipeline takes 15 minutes.

Can I use webhooks and the API together?

Yes, and you should. Webhooks handle real-time delivery (Slack alerts, CRM creation, AI SDR triggers). The API handles backfills, historical queries, and batch analysis. They’re complementary, not either/or.

What webhook triggers are available?

Two triggers: First Match fires once when a visitor is first identified. Every Update fires on every subsequent page view from an already-identified visitor. For CRM and warehouse pipelines, use First Match to avoid duplicates. For Slack alerts and intent-based routing, Every Update gives you ongoing behavioral signals.

How do I handle rate limits in batch pipelines?

The API allows 200 requests per minute. The GET /v1/data endpoint returns 50 records per page. For a full historical backfill, paginate through results with reasonable delays between requests. For ongoing pipelines, webhooks eliminate the need for polling entirely.
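A backfill loop along those lines might look like the following Python sketch. The pagination mechanism is an assumption: `fetch_page` is a hypothetical callable you write around `GET /v1/data` (for instance taking a page number), since this guide does not specify the paging parameter. The 50-record page size and 200 requests per minute come from the limits stated above.

```python
import time
from typing import Any, Callable, Dict, Iterator, List


def backfill(fetch_page: Callable[[int], List[Dict[str, Any]]],
             page_size: int = 50,
             max_per_minute: int = 200,
             sleep: Callable[[float], None] = time.sleep) -> Iterator[Dict[str, Any]]:
    """Yield every record, pausing between pages to stay under the rate limit."""
    delay = 60.0 / max_per_minute  # 0.3s between requests at 200/minute
    page = 1
    while True:
        records = fetch_page(page)
        yield from records
        # A short (or empty) page means there is nothing left to fetch.
        if len(records) < page_size:
            break
        page += 1
        sleep(delay)


# Offline demo: a fake fetcher over 120 records, and a recorder instead of sleeping.
fake_data = [{"email": f"user{i}@example.com"} for i in range(120)]
pauses: List[float] = []
rows = list(backfill(
    lambda page: fake_data[(page - 1) * 50: page * 50],
    sleep=pauses.append,
))
print(len(rows), len(pauses))
```

Passing `sleep` as a parameter keeps the loop easy to test; in production you would leave the default and likely add retry handling for failed requests.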

Start Building

The entire point of this guide is one idea: visitor identification data is only valuable if it reaches the systems where your team works. A dashboard nobody checks is worse than no data at all — it gives the illusion of value while producing none.

The five pipelines above cover every major RevOps use case. Start with the one that has the highest impact for your team:

- Warehouse if you need attribution and analytics

- CRM if your sales team needs leads immediately

- Slack if you want the fastest possible win (15 minutes)

- AI SDR if you’re building autonomous outreach

- CDP if you’re running cross-channel orchestration

Leadpipe offers a free trial with 500 identified leads — enough to build and test every pipeline in this guide. Sign up here and grab your API key from Dashboard > Settings > API Keys.

If you want the full API reference before you start building, the complete developer guide covers every endpoint, and the 5-minute quickstart gets you from zero to your first API call in minutes.

Related Articles

- Visitor Identification API: Complete Developer Guide — Every endpoint, authentication pattern, and code example for building with visitor identification data.

- Leadpipe API in 5 Minutes: Identity Data Made Simple — The fastest path from API key to first data pull, with examples in Python, JavaScript, and cURL.

- How to Add Visitor Identification to Your Clay Waterfall — Connect Leadpipe to Clay for automated enrichment of identified visitors.

- Leadpipe Intent API: 20,000 Topics, Person-Level — Full walkthrough of the Orbit intent API for building audiences of in-market buyers.

- The AI SDR Data Stack: Visitor to Booked Meeting — The complete 6-layer pipeline from anonymous visitor to booked meeting.

- Identity Data as a Service: API-First Visitor Identification — Why API-first architecture matters for platforms building on visitor identification data.

- Leadpipe + Clay + HubSpot Integration Guide — Zero-to-pipeline setup for the most popular RevOps integration stack.