Your AI sales agent has access to a contact database. A CRM. Maybe even intent data from Bombora or G2. It can research prospects, draft emails, and book meetings.

But it doesn’t know that a VP of Marketing just spent 4 minutes on your pricing page. Right now. This second.

Real-time visitor identity is the highest-signal data your agent isn’t using. Someone actively browsing your pricing page is worth more than 10,000 cold contacts sitting in a database. And connecting that signal to your AI agent takes about 20 lines of code.

This guide walks through the entire implementation - from receiving a webhook to generating personalized outreach - with working code you can deploy today.

Table of Contents

- The Architecture

- Prerequisites

- Step 1: Set Up the Webhook Receiver

- Step 2: Parse the Visitor Payload

- Step 3: Classify Intent Level

- Step 4: Build Agent Context

- Step 5: Generate Personalized Outreach

- Step 6: Route the Action

- Alternative: LangChain Integration

- Alternative: CrewAI Multi-Agent Setup

- Performance Tips

- The Numbers

- FAQ

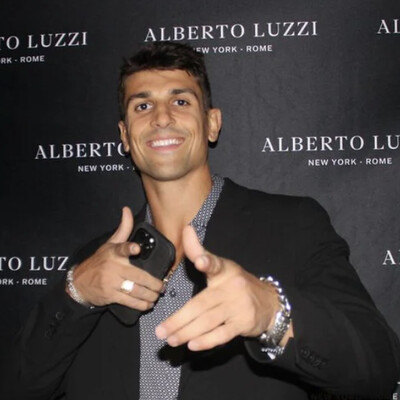

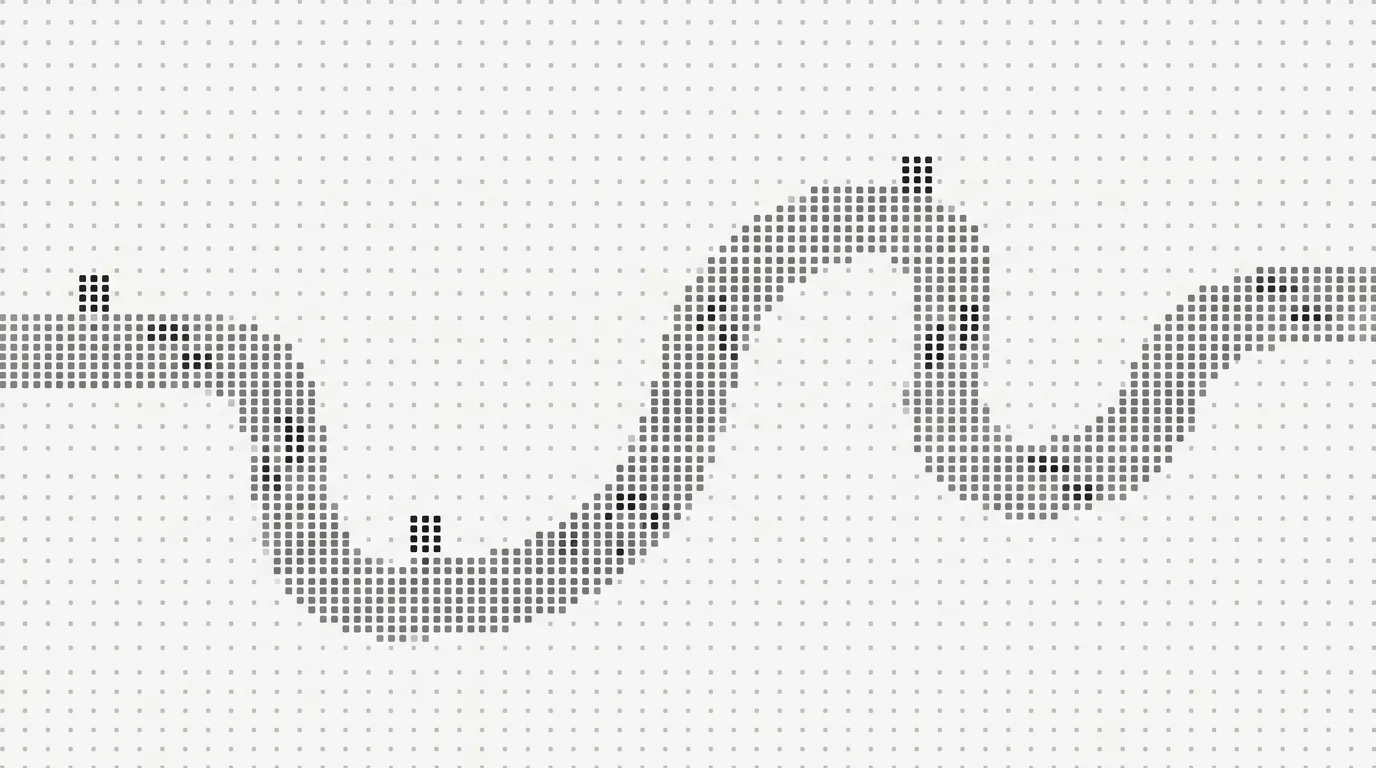

The Architecture

Before writing code, let’s look at what we’re building. The data flow is straightforward:

    Website Visitor → Leadpipe Pixel → Identification → Webhook → Your Agent → Action
                                                           ↓
                                                 [name, email, company,
                                                  job title, LinkedIn,
                                                  pages viewed, duration]

A visitor lands on your site. Leadpipe’s pixel captures the session. The identity graph resolves the anonymous visitor into a real person using deterministic matching - no statistical guessing. A webhook fires to your endpoint with structured JSON containing everything your agent needs: who the person is, what they looked at, how long they stayed.

Your agent receives that payload, classifies intent, builds context, generates a personalized response, and routes the action. The whole cycle - from page visit to personalized email draft - takes seconds.

The key insight: your agent doesn’t need to poll or check for new visitors. The data gets pushed to it the moment someone is identified. Event-driven, not batch-processed.

Prerequisites

Before you start, you’ll need:

- Leadpipe account + API key - Sign up here (free trial includes 500 identified leads). Enable webhook delivery in your dashboard settings. If you’re building this into a platform, check the API developer guide for programmatic pixel management.

- Python 3.9+ - All examples use Python, but the patterns work in any language.

- OpenAI API key - We’ll use GPT-4o for outreach generation. You can swap in Anthropic’s Claude, Mistral, or any other LLM.

- FastAPI - For the webhook receiver. Flask works too, but FastAPI handles async natively which matters for real-time processing.

Install dependencies:

    pip install fastapi uvicorn openai python-dotenv httpx

Set your environment variables:

    export LEADPIPE_WEBHOOK_SECRET="your-webhook-secret"
    export OPENAI_API_KEY="sk-your-key-here"

Step 1: Set Up the Webhook Receiver

First, you need an endpoint that Leadpipe can POST to whenever a visitor is identified. This is your agent’s ears - without it, everything else is dead on arrival.

    import hmac
    import json
    import os

    from dotenv import load_dotenv
    from fastapi import FastAPI, HTTPException, Request

    load_dotenv()

    app = FastAPI()

    # In-memory queue of raw payloads (used by the batch and LangChain examples later)
    visitor_queue = []

    @app.post("/webhook/leadpipe")
    async def receive_visitor(request: Request):
        # Verify the webhook is from Leadpipe. Reject when the secret is unset,
        # and compare in constant time.
        expected = os.getenv("LEADPIPE_WEBHOOK_SECRET", "")
        received = request.headers.get("x-leadpipe-signature", "")
        if not expected or not hmac.compare_digest(received.encode(), expected.encode()):
            raise HTTPException(status_code=401, detail="Unauthorized")

        visitor = await request.json()
        visitor_queue.append(visitor)

        # Trigger agent processing (process_visitor is defined in Step 6)
        await process_visitor(visitor)

        return {"status": "received"}

Run it locally with:

    uvicorn main:app --host 0.0.0.0 --port 8000

For development, use ngrok to expose your local server:

    ngrok http 8000

Copy the ngrok URL (e.g., https://abc123.ngrok.io/webhook/leadpipe) and paste it into your Leadpipe webhook configuration. Every identified visitor will now POST to your endpoint in real time.

Tip: In production, deploy this on a lightweight service - a Railway app, a Render web service, or an AWS Lambda behind API Gateway. The endpoint needs to be always-on and respond within a few seconds.
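One way to stay inside that response window is to acknowledge the webhook immediately and let a background worker do the slow work. The sketch below uses only asyncio from the standard library. `handle_webhook`, `worker`, `demo`, and the stand-in `process_visitor` are hypothetical names for illustration, not part of Leadpipe or FastAPI; in a real app the enqueue step would sit inside your route handler.

```python
import asyncio

processed = []  # stand-in for real side effects, so the example is observable

async def process_visitor(visitor: dict) -> None:
    # Placeholder for the slow work (LLM call, email send)
    await asyncio.sleep(0.01)
    processed.append(visitor["email"])

async def worker(queue: asyncio.Queue) -> None:
    # Drain the queue forever; one failed visitor should not stop the loop
    while True:
        visitor = await queue.get()
        try:
            await process_visitor(visitor)
        except Exception as exc:
            print(f"processing failed: {exc!r}")
        finally:
            queue.task_done()

async def handle_webhook(queue: asyncio.Queue, payload: dict) -> dict:
    # Enqueue and return right away so the sender gets a fast response
    queue.put_nowait(payload)
    return {"status": "received"}

async def demo() -> list:
    processed.clear()
    queue = asyncio.Queue()
    task = asyncio.create_task(worker(queue))
    await handle_webhook(queue, {"email": "a@example.com"})
    await handle_webhook(queue, {"email": "b@example.com"})
    await queue.join()  # wait until the worker has handled everything queued
    task.cancel()
    return list(processed)
```

Running `asyncio.run(demo())` processes both payloads in arrival order. The queue lives in memory, so anything still queued is lost on restart; a durable queue is the usual next step if that matters.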

Step 2: Parse the Visitor Payload

Leadpipe’s webhook delivers a structured JSON payload with everything your agent needs to make decisions. Here’s the complete field reference:

| Field | Type | Use in Agent Context |
|---|---|---|
| email | string | Primary identifier, outreach target |
| first_name | string | Personalization |
| last_name | string | Personalization |
| company_name | string | Company research, ICP check |
| company_domain | string | Enrichment key |
| job_title | string | Role-based routing, seniority check |
| linkedin_url | string | Research, connection request |
| page_url | string | Intent signal (pricing = high intent) |
| visit_duration | number | Engagement level (>60s = interested) |
| pages_viewed | array | Full browsing path |
| referrer | string | Traffic source context |
| return_visit | boolean | Repeat visitors = higher intent |

Not every field will be populated on every identification - coverage depends on what’s available in the identity graph. But the core fields (email, name, company, page visited) are consistently present for deterministic matches.
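Because coverage varies, it can help to check a payload for the core fields before it reaches the agent. The sketch below is one way to do that. The `CORE_FIELDS` tuple is an assumption based on the core fields named above (email, name, company, page visited), and `missing_core_fields` is a hypothetical helper, not part of Leadpipe’s API.

```python
# Assumed set of "core" fields; adjust to what your workflow actually requires
CORE_FIELDS = ("email", "first_name", "last_name", "company_name", "page_url")

def missing_core_fields(payload: dict) -> list:
    """Return the core fields that are absent, null, or blank in a payload."""
    missing = []
    for name in CORE_FIELDS:
        value = payload.get(name)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(name)
    return missing

sample = {
    "email": "dana@example.com",
    "first_name": "Dana",
    "last_name": "",
    "company_name": "Acme Corp",
    "page_url": "/pricing",
    "job_title": None,  # optional field, so it is not reported
}
print(missing_core_fields(sample))  # → ['last_name']
```

A payload with missing core fields could be logged and skipped, or sent to the nurture path instead of outreach.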

For the full webhook schema, see the Webhook Payload Reference. For now, here’s how to extract the fields you care about:

    def parse_visitor(payload):
        # Keep only the fields the agent uses, with safe defaults for missing keys
        return {
            "email": payload.get("email"),
            "first_name": payload.get("first_name", ""),
            "last_name": payload.get("last_name", ""),
            "company_name": payload.get("company_name", "Unknown"),
            "company_domain": payload.get("company_domain", ""),
            "job_title": payload.get("job_title", ""),
            "linkedin_url": payload.get("linkedin_url", ""),
            "page_url": payload.get("page_url", ""),
            "visit_duration": payload.get("visit_duration", 0),
            "pages_viewed": payload.get("pages_viewed", []),
            "return_visit": payload.get("return_visit", False),
        }

Step 3: Classify Intent Level

Not every visitor deserves the same treatment. Someone who bounced off your homepage in 8 seconds is fundamentally different from someone who spent 4 minutes on your pricing page and then visited a case study.

Your agent needs a classification layer that routes visitors into intent buckets:

    def classify_intent(visitor):
        page = visitor.get("page_url", "")
        duration = visitor.get("visit_duration", 0)
        is_return = visitor.get("return_visit", False)
        pages = visitor.get("pages_viewed", [])

        # Pricing or demo page = ready to buy
        if "/pricing" in page or "/demo" in page:
            return "high"

        # Deep research on case studies
        if "/case-stud" in page and duration > 60:
            return "high"

        # Return visitor browsing multiple pages
        if is_return and len(pages) >= 3:
            return "high"

        # Engaged blog reader
        if "/blog" in page and duration > 120:
            return "medium"

        # Product pages with moderate engagement
        if any(p in page for p in ["/features", "/integrations", "/how-it-works"]):
            return "medium"

        # Everything else
        return "low"

Why this matters: The intent classification is what separates a useful AI agent from an annoying email cannon. A high-intent visitor gets immediate, personalized outreach. A medium-intent visitor gets value-add content with a 24-hour delay. A low-intent visitor gets added to a nurture list - no outreach at all.

This is the same prioritization logic that the best human SDR teams use. The difference is your agent applies it to every single visitor, in real time, without anyone manually reviewing a dashboard.

High-intent signals to watch for: pricing page views, demo page visits, return visits within 7 days, 3+ pages in a single session, case study consumption over 60 seconds. If you’re using Leadpipe’s intent data via the Orbit API, you can layer topic-level intent (e.g., “researching CRM migration”) on top of page-level behavior for even sharper classification.
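For alerts and debugging it is often useful to know which of these signals fired, not just the final bucket. The sketch below lists the matched signals for one visitor. `high_intent_signals` is a hypothetical helper. It uses only the payload fields from Step 2, so the 7-day window on return visits is not checked here; the boolean `return_visit` carries no timestamp.

```python
def high_intent_signals(visitor: dict) -> list:
    """List which high-intent signals a visitor matched, for logging or alerts."""
    page = visitor.get("page_url") or ""
    pages = visitor.get("pages_viewed") or []
    duration = visitor.get("visit_duration") or 0

    signals = []
    if "/pricing" in page:
        signals.append("pricing page view")
    if "/demo" in page:
        signals.append("demo page visit")
    if visitor.get("return_visit"):
        signals.append("return visit")
    if len(pages) >= 3:
        signals.append("3+ pages in session")
    if "/case-stud" in page and duration > 60:
        signals.append("case study over 60s")
    return signals

visitor = {
    "page_url": "https://example.com/pricing",
    "pages_viewed": ["/", "/features", "/pricing"],
    "visit_duration": 240,
    "return_visit": False,
}
print(high_intent_signals(visitor))  # → ['pricing page view', '3+ pages in session']
```

The returned list could be appended to a Slack alert so a human can see why a visitor was ranked high.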

Step 4: Build Agent Context

This is where the magic happens. You’re translating raw visitor data into a structured prompt that gives your LLM all the context it needs to generate relevant outreach.

    def build_agent_context(visitor, intent_level):
        return f"""
    You are an SDR for [Your Company]. A visitor was just identified on our website.

    VISITOR DATA:
    - Name: {visitor['first_name']} {visitor['last_name']}
    - Email: {visitor['email']}
    - Company: {visitor['company_name']}
    - Title: {visitor['job_title']}
    - Page visited: {visitor['page_url']}
    - Time on page: {visitor['visit_duration']} seconds
    - Return visitor: {visitor.get('return_visit', False)}
    - Intent level: {intent_level}

    INSTRUCTIONS:
    - If high intent: Draft a personalized email referencing their specific page visit and role.
    - If medium intent: Draft a value-add email with relevant content, no hard sell.
    - If low intent: Do not draft outreach. Return a JSON object with nurture=true.

    RULES:
    - Write a 3-sentence email that feels human, not automated.
    - Reference what they were looking at without being creepy or surveillance-y.
    - Never say "I saw you visited our website" - instead, reference the topic they researched.
    - Match the tone to their seniority: executives get concise, ICs get detailed.
    - Include a soft CTA (question, not a calendar link).
    """

A few critical things to notice:

Don’t be creepy. “I noticed you spent 4 minutes and 23 seconds on our pricing page at 2:47 PM” will get you blocked. “We just published a comparison of our growth plan vs. our enterprise plan - thought it might be useful given your team size” is the same signal, wrapped in value.

Role-based tone. A VP gets a 3-line email. A developer gets technical specifics. Your prompt should instruct the LLM to adjust based on the job_title field.

Return visitor awareness. If return_visit is true, the outreach should acknowledge a research journey - “You’ve been digging into [topic] - happy to answer questions” - rather than acting like a first touch.
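The role-based tone and return-visitor points can also be computed in code and passed to the prompt as an explicit hint, rather than left entirely to the model. The sketch below is one way to do that. The keyword list and the `tone_hint` helper are hypothetical and would need tuning to the job titles you actually see.

```python
import re

# Hypothetical seniority keywords, matched as whole words
EXECUTIVE = re.compile(
    r"\b(chief|ceo|cfo|cmo|cto|coo|vp|svp|evp|vice president|head|director|founder)\b"
)

def tone_hint(job_title: str, return_visit: bool) -> str:
    """Build a one-line style instruction to append to the agent prompt."""
    title = (job_title or "").lower()
    if EXECUTIVE.search(title):
        tone = "Keep it to three short lines with no technical detail."
    else:
        tone = "Include one concrete technical or practical detail."
    if return_visit:
        opening = "Acknowledge that they have been researching the topic."
    else:
        opening = "Treat this as a first touch."
    return f"{tone} {opening}"

print(tone_hint("VP of Marketing", return_visit=True))
print(tone_hint("Software Engineer", return_visit=False))
```

Appending the returned string to the RULES section makes the tone decision reproducible and easy to test.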

Step 5: Generate Personalized Outreach

Now connect the context to an LLM. We’re using OpenAI here, but the pattern is identical for any provider.

    import asyncio

    from openai import OpenAI

    client = OpenAI()

    async def generate_outreach(visitor, intent_level):
        context = build_agent_context(visitor, intent_level)
        # The OpenAI client call is blocking, so run it in a worker thread
        # to keep the webhook's event loop responsive
        response = await asyncio.to_thread(
            client.chat.completions.create,
            model="gpt-4o",
            messages=[
                {"role": "system", "content": context}
            ],
            temperature=0.7,
            max_tokens=500,
        )
        return response.choices[0].message.content

Temperature at 0.7 gives you variation between emails (so 10 outreach emails to 10 pricing page visitors don’t sound identical) while keeping the output coherent. Drop it to 0.3 if you want tighter control. Raise it to 0.9 if your agent’s emails are too formulaic.

Cost note: Each outreach generation with GPT-4o costs roughly $0.01-0.03. Even at 100 visitors per day, you’re looking at $1-3/day in LLM costs. Negligible compared to the cost of an SDR’s time - or the cost of paying an AI SDR platform $5-10K/month.
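The prompt from Step 4 asks the model to return a JSON object with nurture=true instead of an email when intent is low. If you ever send low-intent visitors through the model, the reply needs to be checked before anything is emailed. The sketch below shows one way to tell the two reply shapes apart. `parse_agent_reply` and its return format are hypothetical.

```python
import json

def parse_agent_reply(text: str) -> dict:
    """Classify an LLM reply as either a nurture flag or an email draft."""
    cleaned = text.strip()
    try:
        data = json.loads(cleaned)
    except json.JSONDecodeError:
        # Not JSON, so treat the reply as the email body
        return {"nurture": False, "email": cleaned}
    if isinstance(data, dict) and data.get("nurture") is True:
        return {"nurture": True, "email": None}
    # Valid JSON but not the nurture flag: keep the raw text for review
    return {"nurture": False, "email": cleaned}

print(parse_agent_reply('{"nurture": true}'))
print(parse_agent_reply("Hi Dana, quick question about your rollout plans?"))
```

This guards against a JSON blob being sent to a prospect as if it were an email.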

Step 6: Route the Action

The final step ties everything together. Based on intent classification, your agent takes different actions:

    async def process_visitor(payload):
        # Normalize with parse_visitor (Step 2) so missing fields fall back to
        # defaults instead of raising KeyError below
        visitor = parse_visitor(payload)
        intent = classify_intent(visitor)

        if intent == "high":
            email = await generate_outreach(visitor, intent)
            await send_email(visitor["email"], email)
            await notify_slack(
                f"High-intent: {visitor['first_name']} {visitor['last_name']} "
                f"({visitor['job_title']} at {visitor['company_name']}) "
                f"on {visitor['page_url']}"
            )
        elif intent == "medium":
            email = await generate_outreach(visitor, intent)
            await add_to_sequence(visitor, email, delay_hours=24)
        else:
            await add_to_crm(visitor, status="nurture")

For the helper functions - send_email, notify_slack, add_to_sequence, add_to_crm - you’ll wire up your own integrations. Common choices:

- Email sending: Resend, SendGrid, or direct SMTP

- Slack notifications: Slack Incoming Webhooks (one POST request)

- Sequences: Instantly, Smartlead, or Apollo

- CRM: HubSpot or Salesforce API (or use the Leadpipe + Clay + HubSpot integration for a no-code path)
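For the direct SMTP option, a minimal `send_email` could look like the sketch below, built on the standard library. The environment variable names (`SMTP_HOST`, `SMTP_PORT`, `SMTP_USER`, `SMTP_PASSWORD`, `SMTP_FROM`) and the default subject line are assumptions for illustration. The sketch also assumes your server supports STARTTLS on the configured port.

```python
import asyncio
import os
import smtplib
from email.message import EmailMessage

def build_message(to_addr: str, body: str, subject: str = "Quick question") -> EmailMessage:
    # Assemble a plain-text email; the sender address comes from a hypothetical env var
    msg = EmailMessage()
    msg["From"] = os.getenv("SMTP_FROM", "sdr@example.com")
    msg["To"] = to_addr
    msg["Subject"] = subject
    msg.set_content(body)
    return msg

def _send_blocking(msg: EmailMessage) -> None:
    host = os.getenv("SMTP_HOST", "localhost")
    port = int(os.getenv("SMTP_PORT", "587"))
    with smtplib.SMTP(host, port, timeout=10) as smtp:
        smtp.starttls()
        smtp.login(os.environ["SMTP_USER"], os.environ["SMTP_PASSWORD"])
        smtp.send_message(msg)

async def send_email(to_addr: str, body: str) -> None:
    # smtplib is blocking, so run it in a worker thread (Python 3.9+)
    await asyncio.to_thread(_send_blocking, build_message(to_addr, body))
```

Keeping `build_message` separate from the network call makes the message format easy to test without an SMTP server.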

Here’s a quick Slack notification example:

    import os

    import httpx

    async def notify_slack(message):
        # Slack Incoming Webhooks accept a JSON body with a "text" field
        webhook_url = os.getenv("SLACK_WEBHOOK_URL")
        async with httpx.AsyncClient() as client:
            await client.post(webhook_url, json={"text": message})

That’s it. Six steps: receive, parse, classify, contextualize, generate, route. The entire core logic fits in under 100 lines of Python.


Alternative: LangChain Integration

If you’re already building with LangChain, you can wrap the visitor data feed as a custom tool that your agent can call during its reasoning loop.

    import json

    from langchain.agents import AgentExecutor, create_openai_tools_agent
    from langchain.tools import tool
    from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder
    from langchain_openai import ChatOpenAI

    # Reuses visitor_queue (Step 1), classify_intent (Step 3), and client (Step 5).
    # AgentExecutor-style API; check it against your installed LangChain version.

    @tool
    def get_latest_visitor() -> dict:
        """Retrieve the next queued website visitor identified by Leadpipe."""
        if visitor_queue:
            return visitor_queue.pop(0)
        return {"status": "no new visitors"}

    @tool
    def classify_visitor_intent(visitor_data: str) -> str:
        """Classify a visitor's intent level based on their page views and behavior."""
        visitor = json.loads(visitor_data)
        return classify_intent(visitor)

    @tool
    def draft_outreach_email(context: str) -> str:
        """Draft a personalized outreach email given visitor context."""
        response = client.chat.completions.create(
            model="gpt-4o",
            messages=[{"role": "system", "content": context}],
            temperature=0.7,
        )
        return response.choices[0].message.content

    # The tools agent needs a prompt that includes an agent_scratchpad placeholder
    prompt_template = ChatPromptTemplate.from_messages([
        ("system", "You are a sales assistant that reviews identified website visitors."),
        ("human", "{input}"),
        MessagesPlaceholder(variable_name="agent_scratchpad"),
    ])

    # Create agent with tools
    llm = ChatOpenAI(model="gpt-4o", temperature=0)
    tools = [get_latest_visitor, classify_visitor_intent, draft_outreach_email]
    agent = create_openai_tools_agent(llm, tools, prompt_template)
    executor = AgentExecutor(agent=agent, tools=tools, verbose=True)

    # Run the agent
    result = executor.invoke({
        "input": "Check for new visitors and draft outreach for any high-intent ones."
    })

The LangChain approach gives your agent more autonomy - it decides when to check for visitors, how to classify them, and whether to draft outreach. The tradeoff is less deterministic control. For most sales workflows, the explicit routing in Step 6 is more reliable. LangChain shines when you want the agent to chain multiple research steps (e.g., check CRM history, look up the company on LinkedIn, then draft outreach).

Alternative: CrewAI Multi-Agent Setup

CrewAI lets you split the work across specialized agents that collaborate. This is powerful for complex sales workflows where you want research, writing, and routing handled by different “experts.”

    import json

    from crewai import Agent, Crew, Task

    # Agent 1: Researches the visitor
    researcher = Agent(
        role="Lead Researcher",
        goal="Analyze incoming visitor data and determine outreach strategy",
        backstory="You are a senior sales analyst who evaluates inbound signals.",
        verbose=True,
        llm="gpt-4o",
    )

    # Agent 2: Writes the outreach
    writer = Agent(
        role="Outreach Writer",
        goal="Write personalized, human-sounding emails based on research",
        backstory="You are a top-performing SDR known for emails that get replies.",
        verbose=True,
        llm="gpt-4o",
    )

    # Agent 3: Decides what to do
    router = Agent(
        role="Action Router",
        goal="Route the outreach to the correct channel and timing",
        backstory="You are a sales ops manager who ensures leads get the right touch.",
        verbose=True,
        llm="gpt-4o",
    )

    def run_crew(visitor):
        # Define tasks per visitor, so the parsed payload is embedded in the description
        research_task = Task(
            description=(
                f"Analyze this visitor: {json.dumps(visitor)}. "
                "Determine intent level and key personalization angles."
            ),
            agent=researcher,
            expected_output="Intent classification and 3 personalization talking points.",
        )
        write_task = Task(
            description="Using the research, draft a personalized 3-sentence outreach email.",
            agent=writer,
            expected_output="A ready-to-send email draft.",
        )
        route_task = Task(
            description="Decide: send immediately, delay 24h, or add to nurture. Execute the action.",
            agent=router,
            expected_output="Action taken with confirmation.",
        )

        # Run the crew
        crew = Crew(
            agents=[researcher, writer, router],
            tasks=[research_task, write_task, route_task],
            verbose=True,
        )
        return crew.kickoff()

The CrewAI approach is overkill for simple outreach workflows. Where it shines: complex enterprise sales motions where you want one agent to research the company (check their tech stack, recent funding, open roles), another to draft multi-touch sequences, and a third to coordinate timing across email, LinkedIn, and Slack.

Performance Tips

Once the basic pipeline is running, these optimizations will keep it reliable at scale.

Use “First Match” webhook triggers. If a visitor browses five pages, you don’t want five webhook fires and five outreach emails. Configure Leadpipe to fire on first identification only - subsequent page views within the same session should update the visitor record, not re-trigger your agent.

Batch-process during high traffic. If your site gets traffic spikes (product launches, ad campaigns), queue visitors and process in batches every 5 minutes instead of individually. This prevents your LLM costs from spiking and your email-sending service from rate-limiting you.

    from asyncio import sleep

    async def batch_processor():
        while True:
            if visitor_queue:
                # Take up to 20 queued visitors and remove them from the queue
                batch = visitor_queue[:20]
                visitor_queue[:20] = []
                for visitor in batch:
                    await process_visitor(visitor)
            await sleep(300)  # Process every 5 minutes

Cache company research. If three people from Acme Corp visit your site in one week, you don’t need to research Acme Corp three times. Cache company data (firmographics, tech stack, recent news) keyed by company_domain with a 7-day TTL. Tools like Clay’s Claygent or Perplexity API are great for company research - just don’t call them redundantly.
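A cache like that can be sketched with a plain dict and timestamps. `CompanyCache` and `get_company_research` below are hypothetical names. The `research` argument stands in for whatever lookup you use, and the injectable `clock` exists only to make expiry testable. For multiple workers you would likely want Redis or similar instead of process memory.

```python
import time

SEVEN_DAYS = 7 * 24 * 60 * 60  # TTL in seconds

class CompanyCache:
    """In-memory cache keyed by company_domain, with a fixed TTL."""

    def __init__(self, ttl_seconds=SEVEN_DAYS, clock=time.time):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # company_domain -> (stored_at, data)

    def get(self, domain):
        entry = self._entries.get(domain)
        if entry is None:
            return None
        stored_at, data = entry
        if self._clock() - stored_at >= self._ttl:
            del self._entries[domain]  # expired, so drop it
            return None
        return data

    def put(self, domain, data):
        self._entries[domain] = (self._clock(), data)

def get_company_research(domain, cache, research):
    # Only call the (expensive) research function on a cache miss
    cached = cache.get(domain)
    if cached is not None:
        return cached
    data = research(domain)
    cache.put(domain, data)
    return data
```

With this in place, three visitors from the same domain in one week trigger a single research call.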

Set excluded paths. Not every page view is worth processing. Configure Leadpipe’s exclusion lists to skip low-value pages like your careers page, privacy policy, or support docs. Fewer irrelevant webhooks = cleaner agent input.

Layer in topic-level intent. Page URLs tell you what someone looked at on your site. Leadpipe’s Orbit API tells you what they’ve been researching across the entire web - covering 20,000+ topics. A visitor on your pricing page who’s also been researching “CRM migration tools” across other sites is a fundamentally different lead than one casually browsing. Feed both signals into your agent context.

Add ICP filtering. Not every identified visitor is a fit. Before generating outreach, check company_name and job_title against your ICP criteria. A student researching your product for a class project doesn’t need an SDR email.

    import re

    # Whole-word match, so "intern" does not exclude "Internal" or "International"
    EXCLUDED_TITLES = re.compile(r"\b(student|intern|professor|freelancer?)\b")

    def passes_icp_check(visitor):
        title = (visitor.get("job_title") or "").lower()
        return EXCLUDED_TITLES.search(title) is None

The Numbers

Let’s talk cost. Here’s what this custom AI SDR setup actually runs:

| Component | Monthly Cost |
|---|---|
| Leadpipe (Starter, 500 IDs) | $147/mo |
| OpenAI (GPT-4o, ~100 outreach/day) | ~$20/mo |
| Hosting (Railway/Render) | ~$5/mo |
| Email sending (Resend/SendGrid) | ~$10/mo |
| Total | ~$182/mo |

Now compare that to the AI SDR platforms that do essentially the same thing but with a wrapper and a brand name:

| Platform | Monthly Cost | What You Get |
|---|---|---|
| 11x (Alice) | $5,000-10,000/mo | AI SDR with their contact database |
| Artisan (Ava) | $2,400-7,200/mo | AI SDR with 300M contacts |
| AiSDR | ~$900/mo | Multi-channel AI outreach |
| Your custom setup | ~$182/mo | Full control, your data, your logic |

The platform SDRs are great products. But they’re using their contact databases to find prospects who might be interested. You’re using your website traffic to find people who are interested - the ones already visiting your site. That’s a fundamentally different data layer.

And the custom route gives you something the platforms can’t: complete control over the logic. You decide what counts as high intent. You decide the tone and style. You decide when to send and when to hold. No black box.
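To see how the comparison scales, the arithmetic behind the two tables can be reproduced in a few lines. The figures are copied from the tables above. `cost_multiple` is a hypothetical helper, and the multiples are only as good as those approximate prices.

```python
# Monthly component costs from the first table (USD)
components = {
    "Leadpipe (Starter, 500 IDs)": 147,
    "OpenAI (GPT-4o)": 20,
    "Hosting": 5,
    "Email sending": 10,
}
custom_total = sum(components.values())

# Platform price ranges from the second table (USD per month)
platforms = {
    "11x (Alice)": (5000, 10000),
    "Artisan (Ava)": (2400, 7200),
    "AiSDR": (900, 900),
}

def cost_multiple(platform_price: float, baseline: float = custom_total) -> float:
    """How many times the custom setup's monthly cost a platform price is."""
    return round(platform_price / baseline, 1)

print(f"Custom setup: ${custom_total}/mo")  # → Custom setup: $182/mo
for name, (low, high) in platforms.items():
    print(f"{name}: {cost_multiple(low)}x to {cost_multiple(high)}x")
```

Swap in your own plan prices and volumes to rerun the comparison for your situation.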

For a deeper comparison of data providers powering these workflows, see How to Choose a Data Provider for Your AI SDR.

Putting It All Together

Here’s the complete script in one file. The email, sequence, and CRM calls are left as commented stubs for you to wire up:

    import asyncio
    import hmac
    import os
    import re

    import httpx
    from dotenv import load_dotenv
    from fastapi import FastAPI, HTTPException, Request
    from openai import OpenAI

    load_dotenv()

    app = FastAPI()
    client = OpenAI()

    WEBHOOK_SECRET = os.getenv("LEADPIPE_WEBHOOK_SECRET", "")
    SLACK_WEBHOOK = os.getenv("SLACK_WEBHOOK_URL")
    TEXT_FIELDS = ("email", "first_name", "last_name", "company_name", "job_title", "page_url")
    # Whole-word match, so "intern" does not exclude "Internal" or "International"
    EXCLUDED_TITLES = re.compile(r"\b(student|intern|professor|freelancer?)\b")

    def parse_visitor(payload):
        # Fill missing or null fields so the f-strings below never raise KeyError
        visitor = dict(payload)
        for name in TEXT_FIELDS:
            visitor[name] = payload.get(name) or ""
        visitor["visit_duration"] = payload.get("visit_duration") or 0
        visitor["pages_viewed"] = payload.get("pages_viewed") or []
        return visitor

    def classify_intent(visitor):
        page = visitor.get("page_url", "")
        duration = visitor.get("visit_duration", 0)
        is_return = visitor.get("return_visit", False)
        pages = visitor.get("pages_viewed", [])
        if "/pricing" in page or "/demo" in page:
            return "high"
        if "/case-stud" in page and duration > 60:
            return "high"
        if is_return and len(pages) >= 3:
            return "high"
        if "/blog" in page and duration > 120:
            return "medium"
        if any(p in page for p in ["/features", "/integrations", "/how-it-works"]):
            return "medium"
        return "low"

    def passes_icp_check(visitor):
        title = (visitor.get("job_title") or "").lower()
        return EXCLUDED_TITLES.search(title) is None

    def build_context(visitor, intent_level):
        return f"""You are an SDR. A visitor was just identified on our website.
    Name: {visitor['first_name']} {visitor['last_name']}
    Company: {visitor['company_name']} | Title: {visitor['job_title']}
    Page: {visitor['page_url']} | Duration: {visitor['visit_duration']}s
    Intent: {intent_level} | Return visitor: {visitor.get('return_visit', False)}
    Write a 3-sentence personalized email. Be human, not robotic.
    Reference the topic they researched, not the fact that you tracked them.
    End with a question, not a calendar link."""

    async def generate_outreach(visitor, intent_level):
        context = build_context(visitor, intent_level)
        # The OpenAI client call is blocking, so run it in a worker thread
        response = await asyncio.to_thread(
            client.chat.completions.create,
            model="gpt-4o",
            messages=[{"role": "system", "content": context}],
            temperature=0.7,
            max_tokens=500,
        )
        return response.choices[0].message.content

    async def notify_slack(message):
        if SLACK_WEBHOOK:
            async with httpx.AsyncClient() as http:
                await http.post(SLACK_WEBHOOK, json={"text": message})

    async def process_visitor(payload):
        visitor = parse_visitor(payload)
        if not passes_icp_check(visitor):
            return
        intent = classify_intent(visitor)
        if intent == "high":
            email = await generate_outreach(visitor, intent)
            # await send_email(visitor["email"], email)  # Wire up your sender
            await notify_slack(
                f"High-intent: {visitor['first_name']} {visitor['last_name']} "
                f"({visitor['job_title']} at {visitor['company_name']}) "
                f"on {visitor['page_url']}\n\nDraft:\n{email}"
            )
        elif intent == "medium":
            email = await generate_outreach(visitor, intent)
            # await add_to_sequence(visitor, email, delay_hours=24)
            await notify_slack(
                f"Medium-intent: {visitor['first_name']} from "
                f"{visitor['company_name']} - queued for 24h delay"
            )
        else:
            # await add_to_crm(visitor, status="nurture")
            pass

    @app.post("/webhook/leadpipe")
    async def receive_visitor(request: Request):
        # Reject when the secret is unset, and compare in constant time
        sig = request.headers.get("x-leadpipe-signature", "")
        if not WEBHOOK_SECRET or not hmac.compare_digest(sig.encode(), WEBHOOK_SECRET.encode()):
            raise HTTPException(status_code=401)
        visitor = await request.json()
        await process_visitor(visitor)
        return {"status": "received"}

Copy that into main.py, fill in your env vars, deploy, and point Leadpipe’s webhook at it. You now have a custom AI SDR running on real-time visitor identity data for under $200/month.

FAQ

How fast does the webhook fire after a visitor is identified?

Typically within seconds of identification. The visitor doesn’t need to leave the page - Leadpipe identifies them during the session and fires the webhook immediately. This means your agent can draft outreach while the visitor is still on your site. Speed matters: the widely cited Lead Response Management study reported that leads contacted within 5 minutes were about 21x more likely to be qualified than leads contacted after 30 minutes.

What if the visitor isn’t in my ICP?

Add an ICP filter before the outreach step (see the passes_icp_check function above). Check job title, company size, industry - whatever criteria define your ideal customer. The webhook gives you enough data to filter before spending LLM tokens on outreach generation.

Can I use this with Anthropic’s Claude instead of OpenAI?

Absolutely. Swap the OpenAI client for the Anthropic client and adjust the API call. The prompt structure is identical - Claude is excellent at following structured system prompts for email generation. If anything, Claude tends to produce more natural-sounding outreach out of the box.

What about duplicate visitors? Will the same person trigger multiple outreach emails?

Good question. Use Leadpipe’s “First Match” setting so each person triggers only one webhook per session. On your end, maintain a simple dedup cache (Redis or even a Python dict) keyed by email address with a 7-day TTL. Before processing, check if you’ve already reached out.

How accurate is the visitor identification?

This is the most important question. An independent test scored Leadpipe at 8.7 out of 10 for accuracy, using deterministic matching. Probabilistic tools scored as low as 4.0. When you’re automating outreach with AI, accuracy is non-negotiable - a wrong identification means your agent sends a perfectly crafted email to the wrong person. That’s worse than no email at all.

Can I add this to an existing Clay or Zapier workflow?

Yes. If you’re already using Clay with Leadpipe, you can add an AI outreach step within Clay’s workflow instead of building a custom Python service. The tradeoff: less customization, but faster to set up and no infrastructure to maintain.

What’s Next

You’ve got the building blocks. From here, a few paths worth exploring:

- Add multi-channel routing - LinkedIn connection requests for executives, email for managers, Slack alerts for enterprise accounts

- Build a feedback loop - Track which emails get replies and feed that data back into your prompt to improve over time

- Layer in cross-site intent data - Know what visitors are researching across 20,000+ topics before they even land on your site

- Scale to a full autonomous pipeline - Wire this into the complete AI SDR data stack for end-to-end visitor-to-meeting automation

The visitor identity layer is what makes the rest of the stack work. Without it, your AI agent is guessing. With it, every outreach is grounded in real behavior from real people on your site.

Start your free trial - 500 identified leads, no credit card required →

Related Articles

- The Data Layer AI Sales Agents Are Missing

- Build a Custom AI SDR With Leadpipe and OpenAI

- AI SDR Data Stack: Anonymous Visitor to Booked Meeting

- Visitor Identification API: Complete Developer Guide

- Webhook Payload Reference: Every Visitor Data Field

- How to Choose a Data Provider for Your AI SDR

- Add Identity Resolution to Your SaaS in 10 Minutes